The questions marketers actually care about don't live in a single dashboard.

"Why did conversions drop while traffic went up?"

That question requires Google Analytics (traffic volume and conversion data), Search Console (which queries are driving that traffic), and Google Ads (whether paid campaigns changed). No single data source has the full answer. The insight lives in the correlation between them.

And yet, every AI tool for marketing today gives you access to one source at a time.

The questions that matter most

After working with 20+ agency clients, we've noticed a pattern. The questions that drive real business decisions almost always span multiple data sources:

Attribution questions:

- "Which channel is actually driving revenue — organic, paid, or referral?"

- "Our Google Ads spend went up 30% but revenue didn't follow. Why?"

- "Are our blog posts generating leads or just traffic?"

Diagnostic questions:

- "Bounce rate spiked on the pricing page. Is it a traffic quality issue or a page issue?"

- "Rankings dropped for our top keyword but traffic is flat. What's compensating?"

- "Cart abandonment went up. Is it a site speed problem, a pricing problem, or a traffic source problem?"

Strategic questions:

- "Should we increase ad spend on branded or non-branded keywords?"

- "Which products should we feature on the homepage based on search demand and sales data?"

- "What content gaps exist between what people search for and what we rank for?"

Every one of these requires correlating data from at least two sources. Most require three or more.

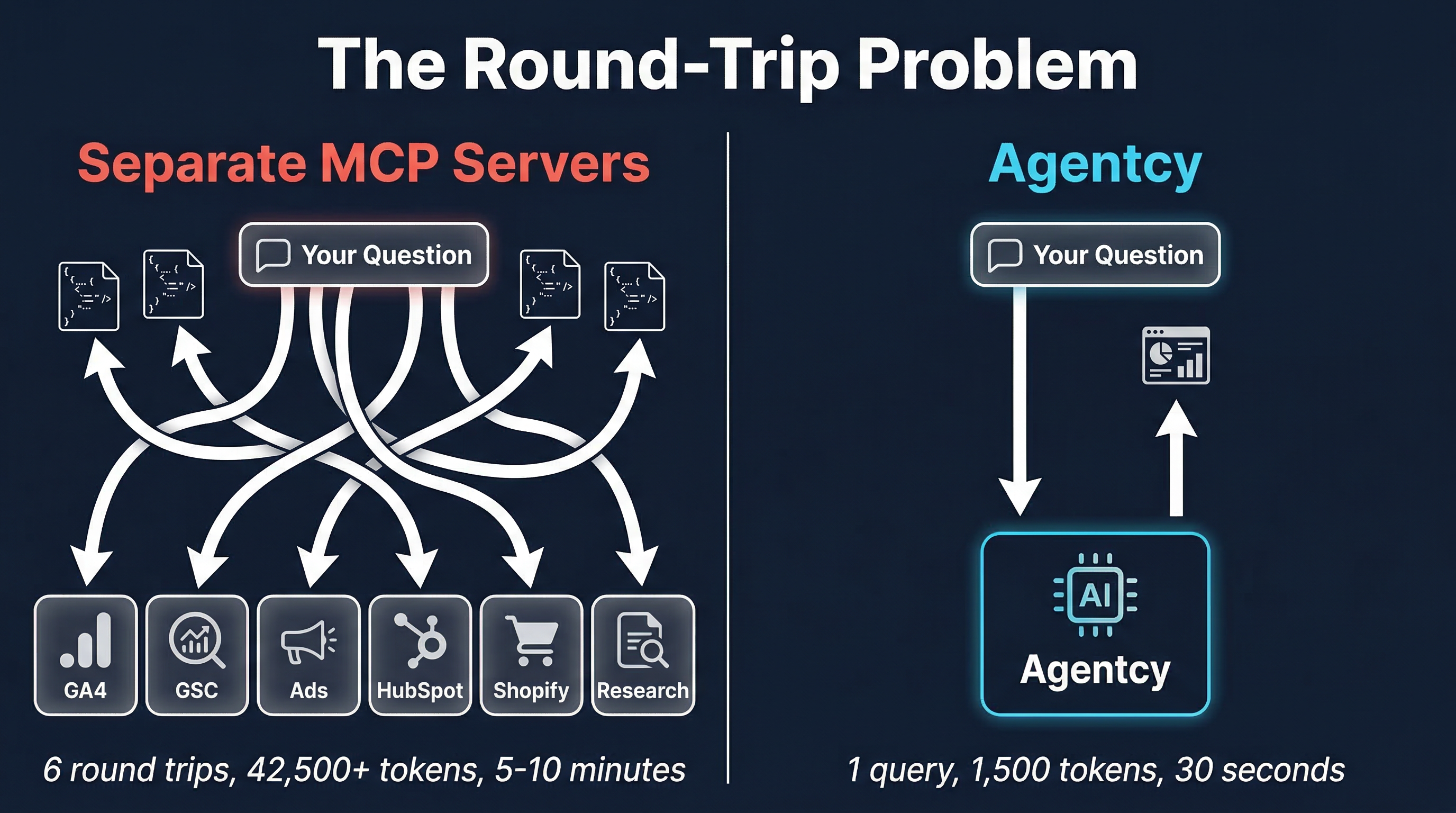

The round-trip problem

With separate MCP (Model Context Protocol) servers or standalone AI tools, answering a cross-source question looks like this:

1. Ask about GA4 traffic → get raw data back.

2. Read through the response and mentally note the relevant numbers.

3. Ask about Search Console rankings → get more raw data.

4. Read through that response and try to correlate it with step 2.

5. Ask about Google Ads campaigns → get even more raw data.

6. Try to synthesize all three in your head (or ask the AI to, though by now it may have lost the details from step 1).

That's six steps at minimum, and every query burns tokens. Each new response also risks pushing earlier data out of the AI's context: by the time you reach the synthesis step, the GA4 data may no longer be in the context window because the Search Console and Ads responses have displaced it.

Total tokens consumed: 42,500+ across all the raw JSON responses — and that's before the 139,575 tokens of tool definitions already loaded in your context window.

Time spent: 5-10 minutes of copy-pasting and re-prompting.

Quality of insight: mediocre, because the AI never had all the data at once.
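To make the context-loss point concrete, here is a minimal sketch of oldest-first truncation, one common strategy for keeping a chat history inside a fixed context window (individual clients may handle overflow differently). The 139,575-token tool definitions and the 42,500-token response total come from the figures above; the per-source split and the 160,000-token window are hypothetical values chosen only for illustration.

```python
from dataclasses import dataclass

# From the text: tokens spent on tool definitions before any question is asked.
TOOL_DEFINITION_TOKENS = 139_575
# HYPOTHETICAL window size, chosen only for illustration.
CONTEXT_WINDOW_TOKENS = 160_000


@dataclass
class Message:
    source: str
    tokens: int


def truncate_oldest(history: list[Message], pinned: int, window: int) -> list[Message]:
    """Drop the oldest messages until pinned tokens plus history fit in the window.

    `pinned` models content that is never evicted (here, the tool definitions).
    """
    kept = list(history)
    while kept and pinned + sum(m.tokens for m in kept) > window:
        kept.pop(0)  # evict the earliest response first
    return kept


# HYPOTHETICAL per-source split of the 42,500 response tokens, in the order asked.
history = [
    Message("GA4", 15_000),
    Message("Search Console", 12_500),
    Message("Google Ads", 15_000),
]
assert sum(m.tokens for m in history) == 42_500

remaining = truncate_oldest(history, TOOL_DEFINITION_TOKENS, CONTEXT_WINDOW_TOKENS)
print([m.source for m in remaining])  # ['Google Ads']
```

Under those assumptions, both the GA4 and Search Console responses are gone before the synthesis step, which is exactly the failure mode described above.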

What cross-source intelligence looks like

The same question, asked once:

You: "Why did conversions drop this week while traffic went up for aurora-fitness.com?"

The response correlates GA4 conversion data, Search Console query data, and Google Ads campaign changes in a single query. No round-trips. No manual correlation. No lost context.

The answer might reveal that organic traffic increased (good) but it was driven by informational keywords (people researching, not buying), while the paid campaign that targeted high-intent buyers was paused for budget review. The conversion drop isn't a site problem — it's a traffic mix problem.

That insight would take 30+ minutes to uncover manually across three dashboards. With cross-source intelligence, it takes 30 seconds.
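Architecturally, "a single query" can be as simple as fanning one request out to every source concurrently and handing the model one merged context. The sketch below assumes three hypothetical async fetchers (`fetch_ga4`, `fetch_search_console`, `fetch_google_ads`) standing in for real API clients; it shows the shape of the pattern, not any particular product's implementation.

```python
import asyncio


# HYPOTHETICAL fetchers standing in for real GA4, Search Console, and Google Ads clients.
async def fetch_ga4(domain: str) -> dict:
    await asyncio.sleep(0)  # placeholder for the network call
    return {"source": "ga4", "domain": domain, "rows": []}  # real report rows would go here


async def fetch_search_console(domain: str) -> dict:
    await asyncio.sleep(0)
    return {"source": "search_console", "domain": domain, "rows": []}


async def fetch_google_ads(domain: str) -> dict:
    await asyncio.sleep(0)
    return {"source": "google_ads", "domain": domain, "rows": []}


async def gather_context(domain: str) -> dict[str, dict]:
    """Query all three sources concurrently and key the results by source."""
    results = await asyncio.gather(
        fetch_ga4(domain),
        fetch_search_console(domain),
        fetch_google_ads(domain),
    )
    return {r["source"]: r for r in results}


context = asyncio.run(gather_context("aurora-fitness.com"))
print(sorted(context))  # ['ga4', 'google_ads', 'search_console']
```

Because `asyncio.gather` waits for all three calls and returns their results together, the model receives every source at once instead of one response per turn.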

Why this is harder than it sounds

Building cross-source intelligence isn't just about connecting to multiple APIs. The hard problems are:

Knowing which sources to query. When someone asks "why did conversions drop," the system needs to know that GA4 has conversion data, Search Console has the query data that reveals traffic intent, and Google Ads has campaign change data. It needs to route to the right sources without the user specifying them.
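A minimal way to sketch this routing step is a lookup from question keywords to the sources that hold relevant evidence. The `SOURCE_TOPICS` table and `route` function below are hypothetical; a real system would more likely use a classifier or the model itself, but the contract is the same: a plain-language question in, a list of sources out.

```python
# HYPOTHETICAL routing table: which source holds which kind of evidence.
SOURCE_TOPICS: dict[str, set[str]] = {
    "ga4": {"conversions", "traffic", "bounce", "revenue", "sessions"},
    "search_console": {"ranking", "rankings", "keyword", "keywords", "queries", "organic", "traffic"},
    "google_ads": {"ads", "spend", "campaign", "campaigns", "paid", "conversions"},
}


def route(question: str) -> list[str]:
    """Return every source whose topic keywords appear in the question, sorted by name."""
    # Normalize words: strip trailing punctuation and lowercase.
    words = {w.strip('?,."').lower() for w in question.split()}
    return sorted(src for src, topics in SOURCE_TOPICS.items() if words & topics)


print(route("Why did conversions drop while traffic went up?"))
# ['ga4', 'google_ads', 'search_console']
```

For the Google Ads spend question from earlier ("Our Google Ads spend went up 30% but revenue didn't follow. Why?"), this router returns only GA4 and Google Ads, since nothing in the question points at organic search.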

Correlating the responses. Raw data from GA4 uses different time formats, metric names, and granularity than Search Console or Google Ads. Someone (or something) needs to align them before synthesis is possible.
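Here is a sketch of that alignment step. It assumes GA4-style dates in `YYYYMMDD` form, Search Console and Google Ads dates in `YYYY-MM-DD` form, and Google Ads cost reported in micros (millionths of the currency unit); check these against the responses your own integration actually receives. The sample rows are illustrative and flattened for brevity (the Ads fields use GAQL-style names), and the output keys (`ga4_sessions`, `gsc_clicks`, and so on) are hypothetical.

```python
from collections import defaultdict
from datetime import date, datetime

# Illustrative sample rows, one per source, shaped loosely after each API's output.
ga4_rows = [{"date": "20240115", "sessions": 1200, "conversions": 30}]
gsc_rows = [{"keys": ["2024-01-15"], "clicks": 800, "impressions": 20000}]
ads_rows = [{"segments.date": "2024-01-15", "metrics.cost_micros": 250_000_000, "metrics.clicks": 140}]


def parse_day(raw: str) -> date:
    """Accept both YYYYMMDD (GA4 style) and YYYY-MM-DD (Search Console / Ads style)."""
    fmt = "%Y%m%d" if len(raw) == 8 else "%Y-%m-%d"
    return datetime.strptime(raw, fmt).date()


def align(ga4: list[dict], gsc: list[dict], ads: list[dict]) -> dict[date, dict[str, float]]:
    """Merge the three sources into one row per day with unambiguous metric names."""
    table: dict[date, dict[str, float]] = defaultdict(dict)
    for r in ga4:
        day = table[parse_day(r["date"])]
        day["ga4_sessions"] = r["sessions"]
        day["ga4_conversions"] = r["conversions"]
    for r in gsc:
        day = table[parse_day(r["keys"][0])]
        day["gsc_clicks"] = r["clicks"]  # organic clicks from Google Search
        day["gsc_impressions"] = r["impressions"]
    for r in ads:
        day = table[parse_day(r["segments.date"])]
        day["ads_cost"] = r["metrics.cost_micros"] / 1_000_000  # micros -> currency units
        day["ads_clicks"] = r["metrics.clicks"]  # paid clicks
    return dict(table)


aligned = align(ga4_rows, gsc_rows, ads_rows)
print(aligned[date(2024, 1, 15)]["ads_cost"])  # 250.0
```

Prefixing each metric with its source matters: a Search Console click is an organic click and a Google Ads click is a paid one, so merging both into a single `clicks` column would quietly corrupt any later comparison.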

Synthesizing with domain expertise. A raw correlation isn't an insight. Knowing that traffic went up while conversions went down is just math. Understanding that this indicates a traffic quality shift — and recommending what to do about it — requires marketing domain knowledge.
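One way to picture the gap between correlation and insight is a rule that turns a divergence into a named hypothesis. The `WeekOverWeek` fields and the 5-point threshold below are hypothetical, and real domain expertise goes well beyond a single rule, but the sketch shows how the traffic-mix reading from the aurora-fitness.com example differs from a site problem.

```python
from dataclasses import dataclass


@dataclass
class WeekOverWeek:
    sessions_change: float             # 0.18 means sessions rose 18%
    conversions_change: float          # -0.22 means conversions fell 22%
    informational_share_change: float  # change in share of organic clicks from informational queries
    high_intent_campaign_paused: bool  # did a paid campaign aimed at buyers stop running?


# HYPOTHETICAL threshold: a 5-point rise in informational share counts as a mix shift.
MIX_SHIFT_THRESHOLD = 0.05


def diagnose(m: WeekOverWeek) -> str:
    """Map a traffic/conversion divergence to a named hypothesis."""
    if m.sessions_change > 0 and m.conversions_change < 0:
        if m.informational_share_change > MIX_SHIFT_THRESHOLD or m.high_intent_campaign_paused:
            return "traffic mix problem: more research-stage visitors, fewer ready buyers"
        return "possible site or funnel problem: traffic mix looks stable"
    return "no traffic/conversion divergence"


# Illustrative inputs, not real data.
print(diagnose(WeekOverWeek(0.18, -0.22, 0.12, True)))
# traffic mix problem: more research-stage visitors, fewer ready buyers
```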

This is the difference between a tool that dumps data and a tool that provides intelligence.

The questions you should be asking

If you're evaluating AI tools for marketing, ask these questions:

- Can I ask a question that spans multiple data sources in a single query? If you have to break it into separate API calls yourself, you're doing the AI's job for it.

- Does the response include recommendations, or just numbers? Data without context is noise. You need a tool that knows what "good" looks like for your industry and page type.

- How many tokens does a typical response consume? If a single query returns 25,000 tokens of JSON, multiply that by the number of queries per day, per client. That's your real cost.

- Can I switch between clients without reconfiguring? Agencies need to move between domains constantly. If switching clients requires a config change, that friction compounds fast.
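The token-cost question in the list above is plain multiplication, and it is worth running with your own numbers. The 25,000-tokens-per-query figure comes from the text; the query volume, client count, and per-million-token price in the usage lines are hypothetical placeholders, not quoted rates.

```python
def monthly_response_tokens(tokens_per_query: int, queries_per_day: int,
                            clients: int, days: int = 30) -> int:
    """Tokens returned by tool responses in one month, across all clients."""
    return tokens_per_query * queries_per_day * clients * days


def monthly_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Convert a token count to cost at a flat per-million-token price."""
    return tokens / 1_000_000 * usd_per_million_tokens


# HYPOTHETICAL inputs: 10 queries/day, 20 clients, $3 per million input tokens.
tokens = monthly_response_tokens(25_000, queries_per_day=10, clients=20)
print(tokens)                         # 150000000
print(monthly_cost_usd(tokens, 3.0))  # 450.0
```

At those placeholder volumes, 25,000-token responses add up to 150 million tokens a month before any tool definitions are counted.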

The future of marketing AI isn't about having access to more data. It's about having a system that understands how all your data connects — and tells you what to do about it.