We spend a lot of time reading what marketers, developers, and agency owners actually say about their tools — in GitHub issues, Reddit threads, Cursor forums, and industry surveys. Not the polished case studies or vendor blog posts. The raw, frustrated, "why doesn't this work" posts.

What we found isn't pretty. But it's honest. And every problem below is one we built Agentcy to solve.

"GA4 sucks"

That's not our opinion. That's a direct quote from Dave Davies, writing in Search Engine Journal. The full sentiment: "I'm not sure who there thought, 'Let's make a lot of the important data hard to access.'"

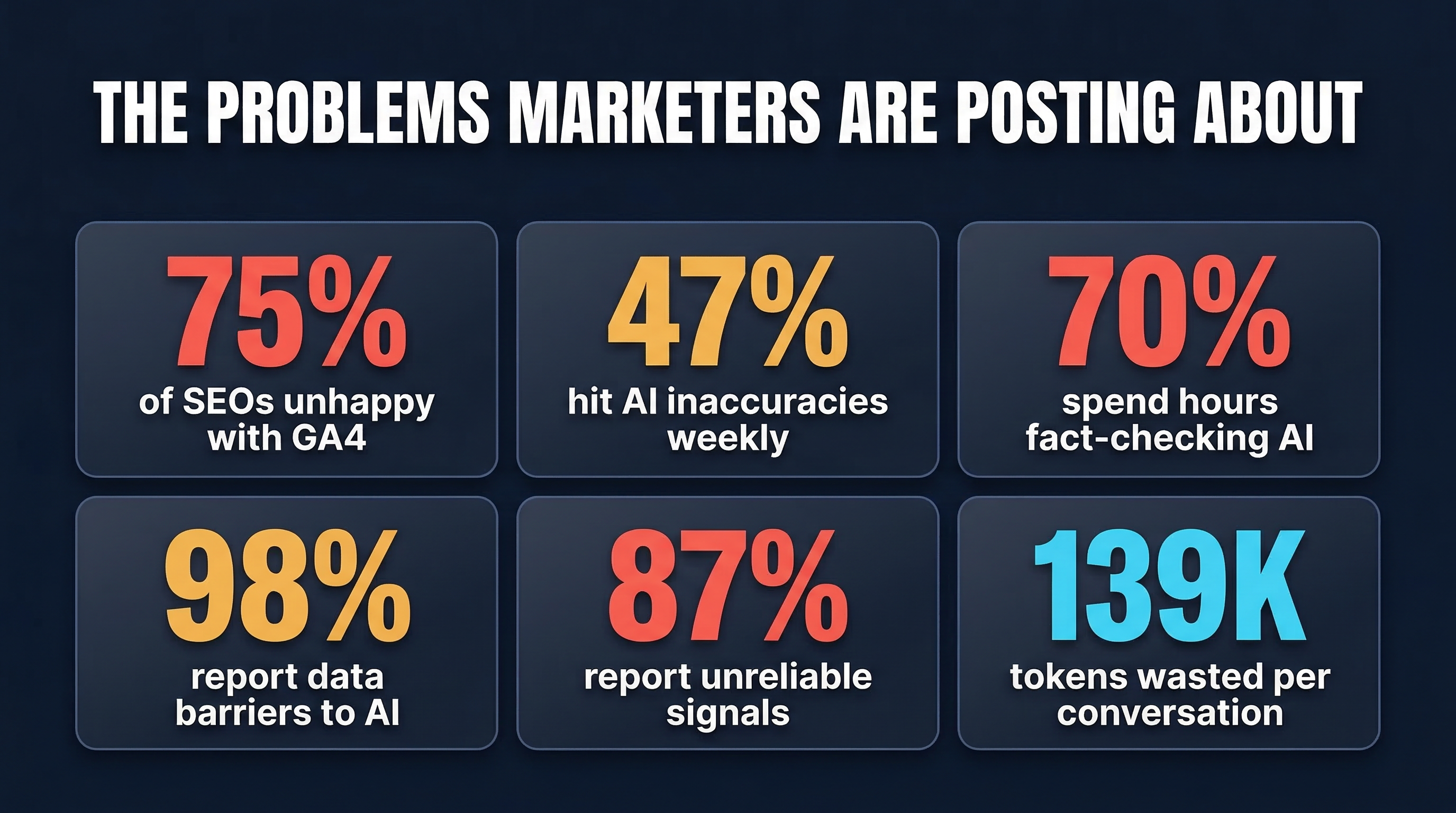

He's not alone. A Search Engine Roundtable poll found that 75% of SEOs are unhappy with GA4. Half said they outright "hate it."

Gill Andrews, a UX consultant, put it this way: "I usually can find my way round any piece of software quickly. But Google Analytics 4 is making me cry."

The Ercule blog captured the core frustration perfectly: "Even though Google keeps telling me that GA4 is more robust... I have no idea how to parse that information. What insights do you have for me, Google?"

The pattern: GA4 has more data than Universal Analytics ever did. But the interface makes it nearly impossible for non-analysts to extract insights. The data exists — it's just locked behind a UI designed for data engineers, not marketers.

What should happen: You should be able to ask "how is my organic traffic performing?" in plain English and get a synthesized answer with specific pages, trends, anomalies, and recommendations — not a 200-row table of dimension values and metric IDs.

"MCP is a context hog"

The MCP token bloat problem is one of the most actively discussed issues in the developer community.

On GitHub, user @rcdailey reported that adding a single MCP server increased their token consumption from 34,000 to 80,000 tokens per session: "Being able to use nearly only half of Claude 4.5's available context for a single MCP tool is just too much."

User @byPawel documented an even more striking example: asking "What's 2+2?" with four MCP servers enabled cost approximately 15,000 tokens and $0.21. Fifteen thousand tokens for a math question a calculator answers for free.

The Speakeasy blog summarized the broader sentiment: "Critics love to point out that MCP is a 'context hog,' burning through tokens at an alarming rate."

Vectara's 2026 predictions went further: "Tool sprawl and MCP proliferation will hit a breaking point in 2026."

The pattern: Every MCP server loads its complete tool definitions into the AI's context window. Tool descriptions, parameter schemas, usage instructions: all of it. A typical marketing setup with separate servers for GA4, Search Console, Google Ads, and a research tool can load 149 tools' worth of definitions. When we measured six common marketing MCP servers, we counted 139,575 tokens of overhead before the user types a single question.

What should happen: The data layer should be architecturally efficient. Fewer tools, each doing more. Server-side intelligence that routes to the right data sources without exposing dozens of tools to the context window. 4 tools instead of 149. ~587 tokens instead of 139,575.
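The size of that reduction can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the figures quoted in this section. The variable names are ours, and the 200,000-token context window is an assumption for illustration, not a measured value.

```python
# Figures quoted in this section.
TOOLS_BEFORE = 149
TOOLS_AFTER = 4
TOKENS_BEFORE = 139_575
TOKENS_AFTER = 587  # approximate ("~587" in the text)

# Assumption for illustration only: a 200,000-token context window.
ASSUMED_CONTEXT_WINDOW = 200_000

tool_reduction = TOOLS_BEFORE / TOOLS_AFTER
token_reduction = TOKENS_BEFORE / TOKENS_AFTER
share_before = TOKENS_BEFORE / ASSUMED_CONTEXT_WINDOW
share_after = TOKENS_AFTER / ASSUMED_CONTEXT_WINDOW

print(f"Tool definitions: {tool_reduction:.2f}x fewer")
print(f"Definition tokens: {token_reduction:.0f}x fewer")
print(f"Context used before the first question: {share_before:.1%} vs {share_after:.1%}")
```

Under that assumed window, the tool definitions alone would occupy more than two thirds of the context before any question is asked.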

"40 tools is a pointless limit"

Cursor enforces a practical limit of about 40 MCP tools — a performance threshold beyond which tool routing degrades. Users hit this with just 2-3 servers.

Forum user flowmotion (4 likes): "Just by adding GitHub I fill already around 20 Tools... that's a pointless limit."

User brandoncollins7 (3 likes): "I have to disable the whole thing cause it pushes me over the limit?"

User bsmi021: "It's a pretty dumb restriction, considering even Claude Desktop can handle 100s of tools."

The pattern: The tool limit exists for a reason — more tools degrade AI performance. Eclipse Source's analysis found that "context rot" sets in as tool definitions crowd out working memory. But the limit punishes users who need multiple data sources, which is most marketers.

What should happen: If a marketing platform needs 60 tools across 4 data sources, the architecture is wrong. The right architecture consolidates data access behind fewer, smarter tools. Cursor's 40-tool threshold should feel generous, not restrictive.
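One way to picture consolidation is a single tool that takes the data source as a parameter, instead of one tool per API method. The sketch below is illustrative only: `query_marketing_data`, the handler functions, and the source names are hypothetical, and it does not use any real MCP SDK.

```python
from typing import Callable, Dict

# Hypothetical per-source handlers. In a real server each would call the
# corresponding API; here they return placeholder strings.
def _ga4(question: str) -> str:
    return f"[ga4] summary for: {question}"

def _search_console(question: str) -> str:
    return f"[search_console] summary for: {question}"

def _google_ads(question: str) -> str:
    return f"[google_ads] summary for: {question}"

# Routing table kept on the server, so it never enters the client's context.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "ga4": _ga4,
    "search_console": _search_console,
    "google_ads": _google_ads,
}

def query_marketing_data(source: str, question: str) -> str:
    """Single entry point: the client sees one tool, not one per API method."""
    handler = HANDLERS.get(source)
    if handler is None:
        known = ", ".join(sorted(HANDLERS))
        raise ValueError(f"Unknown source {source!r}; expected one of: {known}")
    return handler(question)

print(query_marketing_data("ga4", "organic traffic, last 28 days"))
```

In this shape, supporting another data source means adding a handler on the server, not another tool definition in the client's context window.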

"Raw MCP servers just dump the API"

Analytics Edge published a detailed benchmark of existing GA4 MCP servers. Their assessment: existing servers "do little more than expose the raw API methods to the AI with the assumption that the AI will figure it all out."

They measured a 2:1 token ratio between raw responses and optimized ones — and that's a conservative estimate. Our own measurements show ratios of 20:1 or higher when you strip metadata, format values, and synthesize insights.

The pattern: Most MCP servers are thin wrappers around APIs. They pass the raw response through to the AI client: every property quota object, every metric type annotation, every date in YYYYMMDD format. The AI has to parse, interpret, correlate, and analyze data that was formatted for machines, not for reasoning.

What should happen: The MCP server should be the smartest part of the pipeline, not the thinnest. It should strip irrelevant fields, format values for human readability, correlate across sources, flag anomalies, and provide specific recommendations. The AI client should receive insights, not raw payloads.
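To make the first two steps concrete (stripping irrelevant fields and formatting values), here is a minimal sketch. The sample payload is hand-written and abbreviated to resemble a GA4 Data API report response, and `compact_report` is a hypothetical helper, not part of any library. It does not attempt correlation, anomaly detection, or recommendations.

```python
from datetime import datetime

# Hand-written, abbreviated sample shaped like a GA4 Data API report response.
raw = {
    "dimensionHeaders": [{"name": "date"}, {"name": "pagePath"}],
    "metricHeaders": [
        {"name": "sessions", "type": "TYPE_INTEGER"},
        {"name": "bounceRate", "type": "TYPE_FLOAT"},
    ],
    "rows": [
        {
            "dimensionValues": [{"value": "20240115"}, {"value": "/blog/"}],
            "metricValues": [{"value": "1523"}, {"value": "0.342"}],
        }
    ],
    "rowCount": 1,
    "propertyQuota": {"tokensPerDay": {"consumed": 1, "remaining": 199999}},
    "kind": "analyticsData#runReport",
}

def compact_report(report: dict) -> list[dict]:
    """Flatten rows into name/value dicts and drop quota and type metadata."""
    dims = [h["name"] for h in report.get("dimensionHeaders", [])]
    metrics = [(h["name"], h.get("type", "")) for h in report.get("metricHeaders", [])]
    rows = []
    for row in report.get("rows", []):
        out = {}
        for name, cell in zip(dims, row.get("dimensionValues", [])):
            value = cell["value"]
            if name == "date":
                # YYYYMMDD -> ISO 8601 (YYYY-MM-DD)
                value = datetime.strptime(value, "%Y%m%d").date().isoformat()
            out[name] = value
        for (name, mtype), cell in zip(metrics, row.get("metricValues", [])):
            value = cell["value"]
            # Integer metrics stay integers; everything else is parsed as float.
            out[name] = int(value) if mtype == "TYPE_INTEGER" else float(value)
        rows.append(out)
    return rows

print(compact_report(raw))
```

The quota object, the `kind` marker, and the per-metric type annotations never reach the model. Only named, typed values do.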

"47% of marketers hit AI errors weekly"

An NP Digital study of 565 marketers found that nearly half (47%) encounter AI inaccuracies multiple times per week. Over 70% spend hours per week fact-checking AI output. And 36.5% admitted that hallucinated content has been published publicly.

The Supermetrics blog added context: "By the third [mistake], they abandon the tool entirely." And: "Even a small data error can turn into a million-dollar mistake."

The pattern: AI tools hallucinate when they don't have data, and they give bad analysis when they have raw data without context. The solution isn't a better model — it's better data feeding the model. When the AI receives pre-analyzed, contextualized, anomaly-flagged information instead of raw numbers, accuracy goes up dramatically.

What should happen: The AI should analyze your actual data, not generate advice from training data. And the analysis layer between the data and the AI should supply the context that prevents obvious errors before the AI ever sees the numbers. For example, it should flag a 34.2% bounce rate as healthy on a blog page but concerning on a checkout page.
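The bounce-rate example can be sketched as a context-aware check. This is a minimal illustration: `assess_bounce_rate` is a hypothetical helper, and the thresholds are made-up values chosen for the example, not industry benchmarks.

```python
# Hypothetical thresholds for illustration only; not industry benchmarks.
# A bounce rate above the threshold for a page type is labeled "concerning".
CONCERN_THRESHOLD = {
    "blog": 0.70,
    "checkout": 0.25,
}

def assess_bounce_rate(page_type: str, bounce_rate: float) -> str:
    """Label a bounce rate using the page type as context."""
    threshold = CONCERN_THRESHOLD.get(page_type)
    if threshold is None:
        return "no assessment: unknown page type"
    return "concerning" if bounce_rate > threshold else "healthy"

for page_type in ("blog", "checkout"):
    print(page_type, assess_bounce_rate(page_type, 0.342))
```

The same number gets a different label depending on where it was measured, and that label is the context the model would otherwise have to guess.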

"Data silos are the #1 marketing tool problem"

An analysis of 100+ Reddit posts about marketing tool frustrations found a consistent theme: "Most businesses fail not because they lack tools, but because they have too many of the wrong ones."

Industry benchmarks put agency reporting overhead at 15-20 hours per week per reporting cycle, spent just pulling data from siloed platforms. McKinsey research found knowledge workers spend roughly 20% of their workweek searching for internal information. Over a 52-week year, that is about 10.4 work weeks lost (0.20 × 52).

Salesforce's 2026 State of Marketing report quantified it: 98% of marketing teams using AI reported at least one data-related barrier — silos, too much data, or poor quality — preventing them from using AI for personalization effectively.

The pattern: Marketing teams manage dozens of tools across their stack. Each one is a silo. Each one has its own login, its own dashboard, its own data format. Pulling data across silos for a single client report is a manual, error-prone process that eats hours of analyst time.

What should happen: One query. All sources. "How is aurora-fitness.com performing this month?" should correlate GA4, Search Console, Google Ads, and WooCommerce data in a single response. No tab-switching, no CSV exports, no manual correlation. The tool should handle multi-tenant access so agencies can switch between clients in the same session without reconfiguring credentials.
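As a rough sketch of the "one query, all sources" idea with multi-tenant access, the example below fans a single request out to every source configured for a client. Everything here is hypothetical: the fetchers are stubs that return placeholder data, the credential strings are dummy references, and `example-agency-client.com` is an invented second tenant.

```python
from typing import Callable, Dict

Fetcher = Callable[[str, str], dict]  # (domain, credential) -> result

def make_stub_fetcher(source: str) -> Fetcher:
    def fetch(domain: str, credential: str) -> dict:
        # Placeholder: a real fetcher would call the source's API here.
        return {"source": source, "domain": domain, "credential_used": credential}
    return fetch

FETCHERS: Dict[str, Fetcher] = {
    name: make_stub_fetcher(name)
    for name in ("ga4", "search_console", "google_ads", "woocommerce")
}

# Per-client credential references (dummy values), keyed by domain.
CLIENTS: Dict[str, Dict[str, str]] = {
    "aurora-fitness.com": {
        "ga4": "cred-aurora-ga4",
        "search_console": "cred-aurora-gsc",
        "google_ads": "cred-aurora-ads",
        "woocommerce": "cred-aurora-woo",
    },
    "example-agency-client.com": {
        "ga4": "cred-example-ga4",
        "search_console": "cred-example-gsc",
    },
}

def site_overview(domain: str) -> Dict[str, dict]:
    """Query every source configured for one client in a single call."""
    credentials = CLIENTS.get(domain)
    if credentials is None:
        raise KeyError(f"No client configured for {domain!r}")
    return {
        source: FETCHERS[source](domain, credential)
        for source, credential in credentials.items()
    }

# Switching clients is just a different argument; nothing is reconfigured.
print(sorted(site_overview("aurora-fitness.com")))
print(sorted(site_overview("example-agency-client.com")))
```

A real implementation would still need to correlate the per-source results. This sketch only shows the fan-out and the per-client credential lookup.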

The common thread

Every complaint above points to the same gap: the tools exist, but the intelligence layer between data and insight is missing.

- GA4 has the data → but it's trapped in a hostile interface

- MCP connects AI to data → but it dumps raw responses that waste tokens

- Multiple data sources exist → but correlating them is manual and error-prone

- AI models are capable → but they give bad advice when fed bad input

The fix isn't more tools. It's a smarter connection between the tools you already have and the AI you're already using.